Opinion: Engineers can’t ignore social responsibility

“If we are going to look with pride on how our tools make positive contributions to the world, we must also accept some responsibility for how they are used to manipulate and bring misery as well,” argues engineer Ben Marshall.

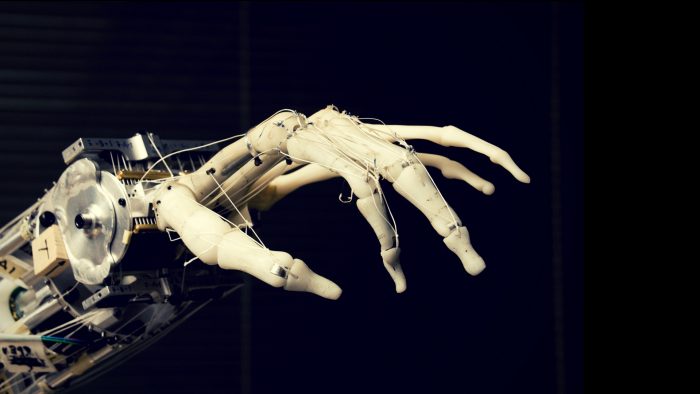

Photo: UW News (CC BY 2.0)

The Cable recently ran an excellent interview with engineers Lyndon Smith and Wenhao Zhang from the Bristol Robotics Laboratory (BRL) on their new 3D facial recognition research.

Clearly Smith and Zhang are at the forefront of their field, and their work on 3D machine vision and facial recognition is something that, as an engineer (albeit in a different field) and former student at the University of Bristol, I can really appreciate as an academic achievement. I would not wish to end this piece without acknowledging this, nor to detract from their work.

I was glad to see them asked explicitly about the potential misuse of their work. This is often completely missed when journalists report on new research, and I believe it is especially important when considering any sort of machine intelligence or automated surveillance.

While it is heartening to see Smith and Zhang have given some thought to how people might perceive this technology, I am afraid I am much more sceptical about how such technologies are used than their comments suggest they are.

Their comments about how fast their system is suggest it is optimised for situations with many people in the scene being analysed, such as public places and transport hubs. While the ‘secure lock’ example they give is a reasonable application, I feel it would be an over-engineered solution to a problem that does not exist.

Bluntly, a system with extremely accurate recognition of people’s faces is a tool for surveillance. Even if it is not explicitly deployed in a crime-prevention capacity (like with the ticketing example they suggest), all of the data could still be made available to law enforcement. It need not even be surveillance by the state. Advertising firms use facial recognition techniques (though not necessarily those developed by the BRL) to track people in public places who look at their marketing campaigns. In the words of one 2015 article from Target Marketing magazine: “Consumers walking past billboards can now see and hear real-time, personalised marketing based on their genders, ages and even moods.”

Smith envisages this as a “tool to help people if they want to use it” and says that “we’re not going to force people if they don’t want to”. This strikes me as naive beyond reason. I cannot opt out of CCTV at the moment, and I don’t see how this system, if deployed widely, would be any different.

This comes at a crucial time for facial recognition technology in particular, given the Metropolitan police trialled automated, crowd-scale facial recognition and logging at the Notting Hill Carnival. While good policing involves making use of the best tools, their methodology and lack of transparency have been explicitly called out by Paul Wiles, the UK Government Biometrics Commissioner. At the same time, the way police retain photographs of (often innocent) people has even been deemed illegal.

My point here is not that Smith and Zhang are wrong to develop this technology. Nor is it a reflection on them and the incredible work undertaken by the BRL.

My point is to take issue with engineers developing technologies without fully and honestly acknowledging the societal implications of their work. As engineers, we build tools which can be used for good and for ill. Most engineers, particularly academics, have little control over the final use cases for their work. This is important to remember, but I do not think it completely insulates us from a responsibility to consider how it affects the world. Academia is a system which rewards grant money and publications in prestigious journals, not invention for social good.

A cursory search reveals dozens of ethically dubious and alarming examples of what happens when engineers don’t consider the second- and third-order effects of our work: the time Facebook tried to manipulate the emotional state of its users to see if they clicked on more adverts, car companies cheating emissions testing, websites and mobile phone apps designed to exploit the addictive tendencies of the people who use them, and even cases where engineers with big hands design phones which are impossible for normal humans to use. In every one of these examples, engineers were among those who designed, built and evaluated these systems.

I’m not talking about individual engineers here. I don’t think anyone goes into engineering thinking they will do harm. I mean that as a profession, if we are going to look with pride on how our tools make positive contributions to the world, we must also accept some responsibility for how they are used to manipulate and bring misery as well. Usually, we can’t stop people using our tools for ill, but we should at least speak out when what we build is used without regard for the common good. We should face up to the duality of purpose which everything we build possesses. We should recognise and respond to the moral and ethical complexities of our work, not hide behind them and push responsibility onto others. When we do push that responsibility away, we further limit our own ability to influence how what we build is used.

If, as engineers, we continue to delude ourselves into thinking we can completely abdicate responsibility for how our work affects society, then we have truly missed what it means to be part of a society and, in my opinion, the point of being an engineer.


I think you’re right on, Ben, thanks for writing this. The previous article about facial recognition seemed to let naiveté off lightly, and didn’t address the massive social and ethical issues of that technology. Engineers do indeed need to recognise their responsibility in creating systems that create misery.