Artificial intelligence, robots, and the future of society: interview with Darren Jones

We are hurtling into a ‘Fourth Industrial Revolution’: an age of artificial intelligence, advanced robotics, and unparalleled automation in the workplace. Should we be worried? To find out, the Cable spoke to Darren Jones – one Bristol MP who is paying attention.

The so-called Fourth Industrial Revolution (4IR), whose early stages we are now living through, will fully embed existing and future digital technologies in society.

As with earlier such shifts, the last of which was the ongoing ‘Digital Revolution’ that began in the 1980s, there are positives and negatives. Two troubling aspects are the rise of decision-making by artificial intelligence (AI)-powered algorithms, and automation in the workplace by robots and AI.

Let’s start with algorithms. ‘Traditional’ algorithms – formal descriptions of how people or computers should perform tasks – have been in widespread use for years. Universities, for example, use ‘degree algorithms’ to calculate final degree classifications from students’ assessed work. But AI-algorithms are created by feeding a computer program huge amounts of data and ‘teaching’ it how to behave. For instance, a facial recognition algorithm might be taught by providing the program with thousands of images and telling it which are of the same people.
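To make the distinction concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than any real institution's method: the grade boundaries, the weighting of final-year marks, and the toy 'similarity scores' standing in for what a facial recognition system might compute. The first function is a traditional algorithm, with every rule written down by a person. The second 'learns' its single rule, a cut-off, from labelled examples.

```python
from typing import List, Tuple

# Hypothetical grade boundaries; real universities set their own rules.
BOUNDARIES = [
    (70.0, "First"),
    (60.0, "Upper second (2:1)"),
    (50.0, "Lower second (2:2)"),
    (40.0, "Third"),
]


def classify_degree(year2_avg: float, year3_avg: float) -> str:
    """Traditional algorithm: a person wrote every rule explicitly."""
    # Assumed weighting: final year counts double.
    final_mark = (year2_avg + 2 * year3_avg) / 3
    for minimum, label in BOUNDARIES:
        if final_mark >= minimum:
            return label
    return "Fail"


def learn_threshold(examples: List[Tuple[float, bool]]) -> float:
    """'Learned' algorithm: choose the cut-off that best fits labelled data.

    Each example is (similarity_score, is_same_person). Nobody writes the
    cut-off down; it comes out of whatever examples the program is shown.
    """
    candidates = sorted(score for score, _ in examples)

    def errors(threshold: float) -> int:
        # Count examples where the prediction disagrees with the label.
        return sum((score >= threshold) != label for score, label in examples)

    return min(candidates, key=errors)


# Invented training pairs: similarity between two photos, and the true answer.
TRAINING_PAIRS = [(0.2, False), (0.4, False), (0.6, True), (0.9, True)]
learned_cutoff = learn_threshold(TRAINING_PAIRS)
```

The point of the contrast is that the learned cut-off is only as good as the examples behind it. Real systems learn millions of such values at once, which is part of why their behaviour is so hard to explain.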

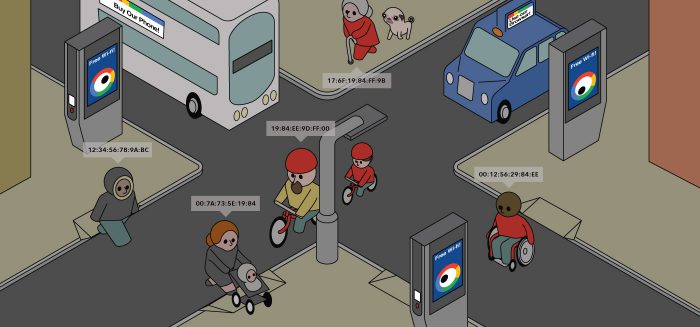

Through the ‘learning’ process, AI-algorithms pick up human biases, such as showing women job-hunters lower paid positions than men. Predicting or explaining algorithms’ decisions can be impossible, because they are based on a machine’s ‘intuition’. This complexity means they are ‘black boxes’ even to their creators. People will not always know when their data is used to train a machine, nor when they are being subjected to a machine’s decisions.

Surrounded by algorithms

Darren Jones, MP for Bristol North West, is one of the few MPs with significant insight into 4IR – he leads Labour Digital, a volunteer group comprising entrepreneurs, trade unionists, academics and others, which shapes Labour policy on the subject. Jones is also a member of the Science and Technology Select Committee, one of a number of cross-party groups that grill ministers and officials on policy and spending decisions, call on experts to interrogate policies further, and publish reports to which the government has to respond. Jones’ committee has ongoing inquiries into algorithmic decision-making and biometrics and forensics.

How worrying does he find the current situation? “We haven’t had a public debate about the ethics of algorithms: lots of people don’t understand them,” Jones warns.

Such a debate is needed urgently – because AI-algorithms already surround us, without us even knowing. One of the most controversial examples is their use by police forces. Constabularies have used AI-algorithms at Notting Hill Carnival and elsewhere for real-time facial recognition. These algorithms are notoriously poor at recognising black people’s faces, increasing the risk of false positives. What’s more, since the police national database contains millions of images of people never convicted of a crime, the algorithms enable police to identify innocent people – arguably a major infringement on privacy.

Constabularies are also deploying algorithms that use crime data to predict, to within a 500 sq ft area, where crime will happen again, as well as to predict arrested people’s reoffending risk. The latter made news recently after analysis showed that adding postcodes into training data could reinforce harmful biases in policing decisions: the algorithm decided people in poorer areas would be more likely to reoffend.
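A toy sketch in Python shows how this can happen. It is not the police algorithm, whose workings are not described here: the records are invented, the area names are placeholders, and the 'model' is simply the historical rearrest rate for each postcode area.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Invented toy records: (postcode_area, was_rearrested). Not real data.
HISTORY: List[Tuple[str, bool]] = [
    ("AREA_A", True), ("AREA_A", True), ("AREA_A", False), ("AREA_A", True),
    ("AREA_B", False), ("AREA_B", False), ("AREA_B", True), ("AREA_B", False),
]


def train_rates(history: List[Tuple[str, bool]]) -> Dict[str, float]:
    """'Train' by recording the past rearrest rate for each postcode area."""
    counts = defaultdict(lambda: [0, 0])  # area -> [rearrests, total records]
    for area, rearrested in history:
        counts[area][0] += int(rearrested)
        counts[area][1] += 1
    return {area: hits / total for area, (hits, total) in counts.items()}


def risk_label(area: str, rates: Dict[str, float], cutoff: float = 0.5) -> str:
    """Label a person using nothing but where they live (hypothetical cut-off)."""
    return "high" if rates[area] > cutoff else "low"


rates = train_rates(HISTORY)
label_a = risk_label("AREA_A", rates)
label_b = risk_label("AREA_B", rates)
```

In this sketch, two people with identical records receive different labels purely because of their address. If area A has more recorded rearrests partly because it was policed more heavily in the past, the model hands that history back as a prediction about the future.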

As Cathy O’Neil, a Harvard PhD graduate, data scientist, and author of the book Weapons of Math Destruction, contends, algorithms that send more police to poorer areas, and sentence residents to longer terms, can feasibly feed into other algorithms that rate the same people as high risk for loans and mortgages and block them from jobs.

The right to human intervention

The EU’s incoming General Data Protection Regulation (GDPR), Jones points out, guarantees individuals the right not to be subject to decisions made entirely by algorithms. Decisions should involve human intervention – for instance, police officers balancing algorithmic suggestions against their own judgements. The GDPR also gives people the right to challenge decisions made.

Alarmingly, however, the UK’s Data Protection Bill, currently progressing through parliament, ‘permits exemptions’ from this right.

Jones is concerned about this, and about how loosely ‘human intervention’ might be interpreted. “You can see how the police, for example, might end up becoming slack: ‘The algorithm says do this, so I’ll do this,’” he says. “Suddenly that’s seen as human intervention and the right no longer exists.” The Bill, moreover, exempts ‘immigration enforcement’ decisions from regulation. “What does that mean? It’s not defined,” says Jones. Labour has tabled amendments on these aspects, but, Jones adds, “they won’t become law unless the government supports them”.

An independent body, Jones goes on, should analyse input data, test algorithmic decisions, and check for biases. The newly announced £9m Centre for Data Ethics and Innovation would ideally fulfil this role, but its designated remit and function are still to be decided. Moreover, it is housed in the Department for Digital, Culture, Media and Sport – aka the ‘Ministry of Fun’ – even though, as Jones argues, “This affects every area of government and needs to be driving every area of policy.” The Centre, he continues, is “just an advisory body” – meaning the government doesn’t actually have to listen to it. Through one tabled amendment, he hopes to give it statutory influence.

Giving away public data

Shady decision-making aside, another concern is that companies hold on to the huge profits they can generate via AI-algorithms, which may have been trained using our data. A case in point is the NHS handing Google’s DeepMind company millions of patient records to develop AI-algorithms to spot disease, despite no revenue for the NHS having been secured in return. “We need to be aggressive on this,” Jones says. “Think about how much revenue we could generate at a time when we need to be pumping in much more money [to the NHS].”

Few parliamentarians, especially in the Commons, seem to be paying attention. “If you watch the Data Protection Bill’s second reading, there’s barely anybody there,” says Jones. Of those MPs who are engaged, he warns, “Government can choose to ignore us. The will of parliament used to be quite definitive – even if there were opposition, there’d be debate. Under this government, the Tories aren’t even turning up a lot of the time: it’s a real problem for our democracy.”

Job losses from automation

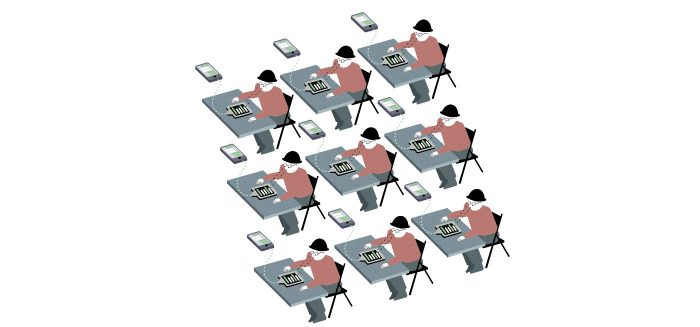

That assessment is all the more troubling given that AI-powered algorithms won’t just make decisions about our lives: along with advanced robotics, they will put people out of work. Predictions about total job losses from automation vary: the World Economic Forum expects 7.1 million losses across 15 countries by 2020, with routine office and admin roles hit hardest, followed by manufacturing and production.

“You’ve only got to go to GKN Aerospace [a manufacturing plant with about 2,000 employees in Bristol] and look at wing-component manufacturing,” says Jones. “Ten years ago, it would have been loads of people with drills: now there’s whizzy robotic arms lasering things in.” Soon, driverless vehicles could drastically affect “tonnes of logistics jobs in Avonmouth”, he goes on. To protect workers, Jones suggests government and industry work together to identify jobs most at risk and find ways to keep people in meaningful work. His team wants to start a discussion on this with all 1,800 employers in his constituency. “We need to know businesses’ investment decisions, almost in real-time, so we know when jobs are going to change – before it happens,” he argues.

Jones also emphasises the importance of opportunities to ‘upskill’, and says higher education institutions like the Open University have a role to play – but he is vaguer on what that role would be. And he concedes there are no guarantees that people will have access to ‘upskilling’, nor that other jobs will always be available.

So what now for policy? Jones hopes the Science and Technology Select Committee will have influence – and as well as leading Labour Digital, he’s about to launch a cross-party ‘Tech Ethics’ commission to try to influence policy “over the next parliament or two”.

It’s easy to feel reassured by Jones’ confidence – but it’s telling that his most common refrain during our interview is that “a lot needs to change”. And he and the small band of interested politicians can’t change things alone. Ordinary people will need to take notice and make some noise to ensure 4IR benefits, rather than destroys, societies and livelihoods. The Guardian’s recent investigation into the data analytics firm Cambridge Analytica – whose ‘psychological warfare tool’ has alleged links to the Trump and Vote Leave campaigns – only emphasises the urgency. In the words of Cathy O’Neil, “This is a political fight. We need to demand accountability for our algorithmic overlords.”